Artificial intelligence has moved from the lab to the core of business operations. Retailers use it to predict demand. Hospitals employ it to support diagnosis. Factories rely on it to detect faults in real time.

Yet as organisations rush to adopt AI, many hit the same roadblock: their existing IT infrastructure was never built for AI workloads. This disconnect between ambition and capability forces a critical choice, cloud vs on-premise, that affects everything from performance to compliance.

In the AI era, this decision isn’t just about cost or convenience. It’s about whether your systems can handle the data demands, processing power, and security requirements of intelligent applications.

This article explains why cloud vs on-premise decisions matter more than ever, and how hybrid cloud infrastructure is emerging as a practical solution.

Let’s start with two real-world examples that reveal the fundamental challenges companies face today.

Two Real-World Examples That Reveal the Problem with AI Infrastructure

Both scenarios highlight why the basics of AI infrastructure differ so much from traditional IT setups.

Example 1: The Retail Chain Struggling to Make AI Useful

A retail chain launched an AI tool in the cloud to predict what products each store would need next week. The cloud was fast to set up, easy to scale, and didn’t require buying new hardware — perfect for testing new ideas.

But there was a problem: all their sales history was stored on old on-premise servers in their own data centre.

Every time the AI needed data, the system slowed down. Predictions came late — often after managers had already placed orders. Trust in the AI faded fast.

What went wrong? The AI worked, but the infrastructure didn’t. AI needs instant access to data, but here the data was stuck in old systems. This is a common cloud migration challenge: your AI is in the cloud, but your data isn’t.

Example 2: The Hospital That Wants AI but Cannot Use the Cloud

A hospital wanted to use AI to read MRI scans faster, helping doctors make quicker diagnoses.

But medical privacy laws required all patient data to stay on-premise. Moving it to the cloud was not allowed.

The hospital’s servers were built to store files, not run AI. They didn’t have the GPU power needed for AI analysis. Each scan took too long to process, slowing down patient care.

The result: The hospital saw AI’s value but couldn’t use the cloud. Their on-premise limitations – outdated hardware and strict rules – kept them from moving forward.

The Common Issue

Both organisations faced the same core problem: their IT infrastructure wasn’t built for AI.

- The retailer struggled with data movement.

- The hospital struggled with computing power.

AI isn’t just another app — it changes what your systems need to do. And that forces every organisation to ask: where should AI workloads run?

The Real Problem IT Teams Are Facing Today

Most IT systems were built for traditional tasks like email, databases, and internal software. These jobs were steady, predictable, and could handle a bit of delay.

AI workloads are different.

They push infrastructure in new ways. In practice, AI workloads demand:

- Specialised hardware like GPUs, not just standard servers

- Constant data flow — huge amounts of information moving quickly

- Near-instant results — delays break the AI’s usefulness

- Tighter security & compliance — especially with sensitive data

Let’s revisit our two examples:

- Retailer: Their bottleneck was data movement between the cloud and on-premise

- Hospital: Their bottleneck was computing power stuck on outdated on-premise hardware

Both hit a wall because their systems weren’t designed for AI’s unique needs.

The takeaway is clear: AI isn’t just another tool you add to your existing setup. It changes how your entire infrastructure must operate – where data lives, how it moves, and what hardware processes it.

So, how do you build for AI? That brings us to the core decision: cloud, on-premise, or something in between.

Cloud vs On-Premise: What Each Does Well and Where They Struggle

To make the right choice for AI, you need to understand what each environment does best – and where it falls short. Let’s break it down simply.

1. Cloud, Explained Simply

Cloud platforms let you rent computing power over the internet. They’re fast to start, easy to scale up or down, and require no upfront hardware investment.

For AI, cloud works well when you need to:

- Experiment quickly with new AI models

- Train AI systems using powerful, on-demand GPUs

- Scale instantly during peak processing times

This is exactly why the retail company started in the cloud.

But cloud migration challenges appear when:

- AI workloads run continuously, so pay-as-you-go costs add up quickly

- Performance lags if your data lives elsewhere (like the retailer’s on-premise servers)

- Compliance rules block sensitive data from being stored in the cloud
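To see why continuously running workloads change the economics, here is a rough rent-vs-own break-even sketch. Every price in it is a hypothetical placeholder, not a real cloud or hardware quote, and the model deliberately ignores depreciation, financing and idle time.

```python
# All prices are hypothetical placeholders, not real cloud or hardware quotes.
CLOUD_GPU_RATE_PER_HOUR = 4.00   # assumed on-demand rental price per GPU-hour
ON_PREM_GPU_CAPEX = 30_000.00    # assumed upfront cost to buy and install one GPU
ON_PREM_OPEX_PER_HOUR = 0.60     # assumed power, cooling and support per GPU-hour


def break_even_hours(cloud_rate: float, capex: float, opex_rate: float) -> float:
    """Return the GPU-hours after which owning becomes cheaper than renting.

    Simplified: ignores depreciation, financing, utilisation gaps and price changes.
    """
    if cloud_rate <= opex_rate:
        return float("inf")  # renting is cheaper every hour, so owning never catches up
    return capex / (cloud_rate - opex_rate)


hours = break_even_hours(CLOUD_GPU_RATE_PER_HOUR, ON_PREM_GPU_CAPEX, ON_PREM_OPEX_PER_HOUR)
print(f"Break-even after about {hours:,.0f} GPU-hours")

# Compare an occasional experimentation pattern with an always-on inference service.
for label, hours_per_week in [("10 hours/week of experiments", 10), ("24/7 inference", 24 * 7)]:
    print(f"{label}: break-even in about {hours / hours_per_week:,.0f} weeks")
```

With these made-up numbers, an always-on workload pays back the hardware in roughly a year, while occasional experimentation would take well over a decade. That gap is why the cloud suits experiments and why continuous workloads are where cloud bills start to hurt.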

2. On-Premise, Explained Simply

On-premise means you own and manage your own servers, usually in your own data centre. You have full control, predictable costs, and direct oversight of your data.

On-premise works well for AI when:

- Data is highly sensitive (like patient health records)

- Compliance rules are strict and require local data storage

- You need predictable, tightly controlled performance

This is why hospitals, banks, and government agencies often keep workloads on-premise.

But on-premise limitations are real:

- High upfront cost to buy and set up hardware

- Slow to upgrade — no instant access to the latest GPUs

- Hard to scale — adding capacity takes time and money

These on-premise limitations are exactly what held the hospital back.

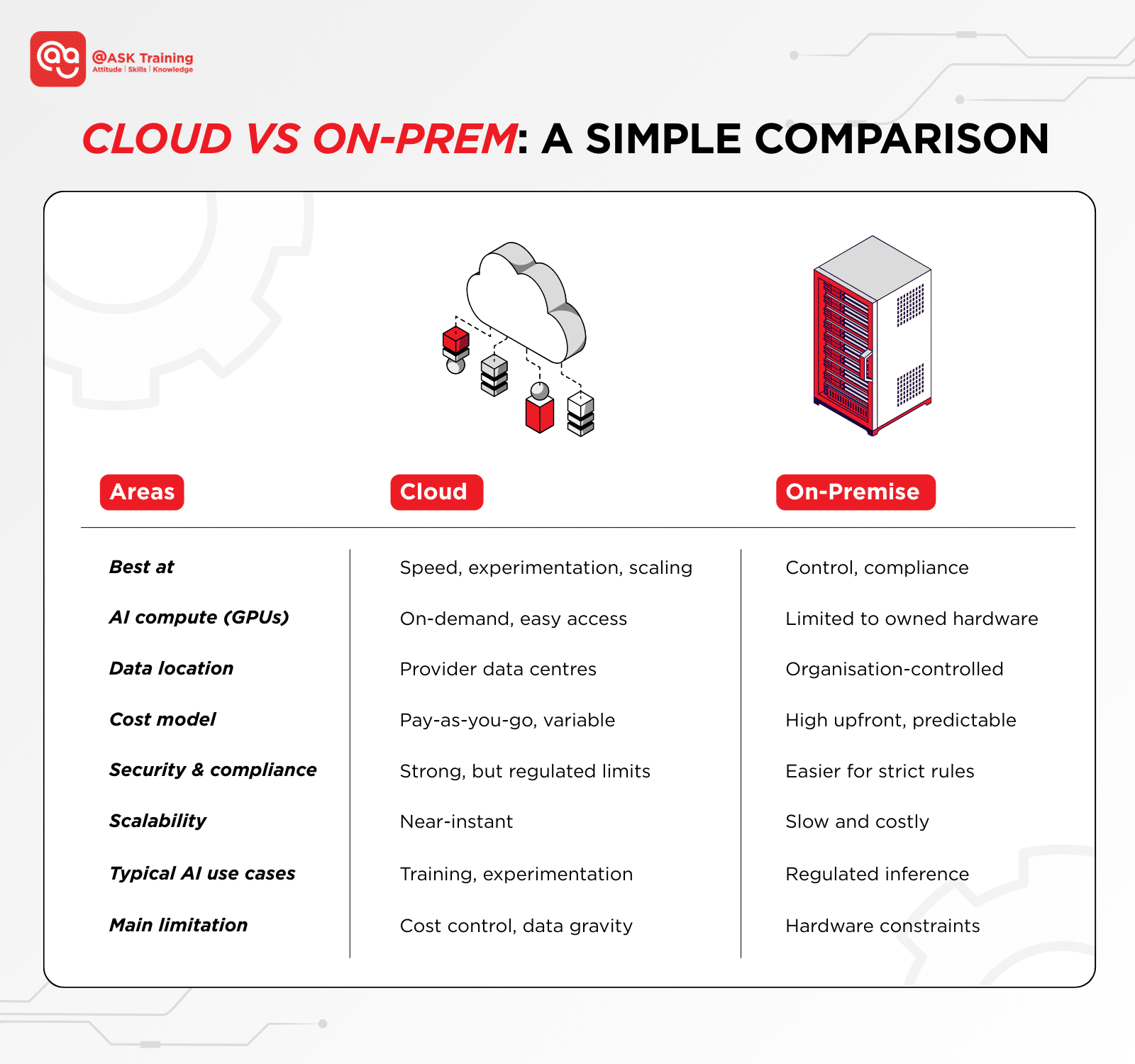

Cloud vs On-Prem: A Simple Comparison

So, which is better for AI?

The answer isn’t one or the other – it’s about using each where it fits best. And that’s why many organisations are now turning to a third path: hybrid cloud infrastructure.

Why Hybrid Cloud Infrastructure Is Becoming the Default

Since cloud and on-premise each solve different parts of the AI puzzle, more organisations are choosing a middle path: hybrid cloud infrastructure.

Hybrid simply means mixing both environments to get the best of each:

- Keep sensitive data and real-time tasks on-premise for control and compliance

- Use the cloud for scalable training, testing, and heavy computation

Let’s revisit our examples with a hybrid solution:

- Retailer: Sales data stays on-premise, but AI models train in the cloud using secure, scheduled data syncs.

- Hospital: Real-time MRI analysis runs on-premise to protect patient data, while the cloud is used to refine and improve the AI model with anonymised data.
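The retailer’s “secure, scheduled data syncs” could work incrementally, sending only what changed since the last run instead of the whole sales history. The sketch below assumes a hypothetical `updated_at` field on each record and a hypothetical `upload` function standing in for an encrypted transfer to cloud storage; it is an illustration of the watermark pattern, not a production pipeline.

```python
from datetime import datetime
from typing import Callable


def run_scheduled_sync(
    rows: list[dict],
    last_synced_at: datetime,
    upload: Callable[[list[dict]], None],
) -> datetime:
    """Send only records changed since the last sync and return the new watermark.

    `rows` stands in for the on-premise sales table; `upload` is a hypothetical
    function that would push a batch to cloud storage over an encrypted connection.
    """
    batch = [r for r in rows if r["updated_at"] > last_synced_at]
    if not batch:
        return last_synced_at  # nothing new; keep the old watermark
    upload(batch)
    # Advance the watermark to the newest record actually sent.
    return max(r["updated_at"] for r in batch)


# Example run with in-memory data in place of a real database and cloud bucket.
sales = [
    {"store": "S01", "sku": "A100", "units": 12, "updated_at": datetime(2024, 5, 1, 23, 0)},
    {"store": "S02", "sku": "B200", "units": 4, "updated_at": datetime(2024, 5, 2, 22, 30)},
]
sent = []
watermark = run_scheduled_sync(sales, datetime(2024, 5, 2, 0, 0), sent.extend)
print(len(sent), watermark)
```

Because only new records cross the network, each nightly sync stays small and predictable. A real deployment would also need retries, handling for late-arriving records, and encryption in transit.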

But what about when even hybrid isn’t fast enough — when decisions need to be made in milliseconds? That’s where edge computing comes in.

Edge Computing Simplified

Edge computing takes this idea a step further by moving AI processing directly to where the data is created – like in a factory, a store, or a medical device.

Instead of sending everything to a central system, local devices make instant decisions.

Edge computing is useful when:

- Speed is critical — think self-driving cars or robotic surgery

- Internet is unreliable — remote sensors or offshore equipment

- Decisions must be instant — security cameras or assembly line monitors

You’ll find edge setups in smart cameras, medical monitors, and industrial IoT sensors.
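One way to reason about edge placement is a latency budget: a location only qualifies if the network round trip plus model run time fits inside the decision deadline. The sketch below uses hypothetical timings for each location; real figures depend entirely on your network and hardware.

```python
from dataclasses import dataclass


@dataclass
class Placement:
    name: str
    network_round_trip_ms: float  # assumed time to ship input out and get the result back
    inference_ms: float           # assumed model run time on that location's hardware

    def total_latency_ms(self) -> float:
        return self.network_round_trip_ms + self.inference_ms


def placements_within_budget(options: list[Placement], deadline_ms: float) -> list[str]:
    """Return the locations that can answer before the decision deadline."""
    return [p.name for p in options if p.total_latency_ms() <= deadline_ms]


# Hypothetical numbers: a fast cloud GPU, a nearby data centre, and a slower edge chip.
options = [
    Placement("cloud region", network_round_trip_ms=80.0, inference_ms=15.0),
    Placement("on-premise data centre", network_round_trip_ms=10.0, inference_ms=25.0),
    Placement("edge device", network_round_trip_ms=0.0, inference_ms=30.0),
]

# An assembly-line camera at 30 frames per second has roughly 33 ms per frame.
print(placements_within_budget(options, deadline_ms=33.0))
```

In this made-up case the edge device has the slowest processor, yet it is the only location that meets the deadline, because it skips the network entirely.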

Why Hybrid and Edge are the Future of AI Infrastructure

Hybrid and edge approaches exist for one reason: AI needs speed, security, and flexibility all at once.

No single environment can provide it all – but together, they can.

- Cloud for scale and experimentation

- On-premise for control and compliance

- Edge for speed and real-time action

This blended approach is how modern organisations are turning AI infrastructure challenges into opportunities.

How AI Is Changing IT Roles

AI isn’t just changing technology; it’s transforming IT careers. In Singapore, we’re moving beyond generalist sysadmin roles into specialised positions like AI Platform Engineer.

The job is becoming more technical and strategic. IT teams now manage high-density hardware and autonomous orchestration to prevent GPU starvation and to control the hidden Inference Tax: the major ongoing cost of keeping AI models running.

(Source: Microsoft)

New IT responsibilities now include:

- Orchestrating AI workflows across cloud, on-premise, and edge — not just moving data, but enabling cloud-to-edge fluidity

- Preventing GPU starvation by ensuring AI models have the compute power they need, when they need it

- Managing the Inference Tax by optimising where and how AI runs to balance cost, speed, and compliance

- Designing autonomous systems that self-manage performance, scaling, and security

Think back to our examples:

- The retailer needed someone who could design a fluid data pipeline between cloud AI and on-premise sales systems.

- The hospital required an expert who could deploy and manage local GPU clusters for real-time diagnostics while meeting MOH guidelines.

The shift is clear: IT roles are moving from maintaining servers to architecting intelligent hybrid platforms. According to the World Economic Forum, professionals with these hybrid AI skills now command a “skill premium” in the job market.

In short: We’re no longer just IT support; we’re the builders of Singapore’s AI-ready infrastructure.

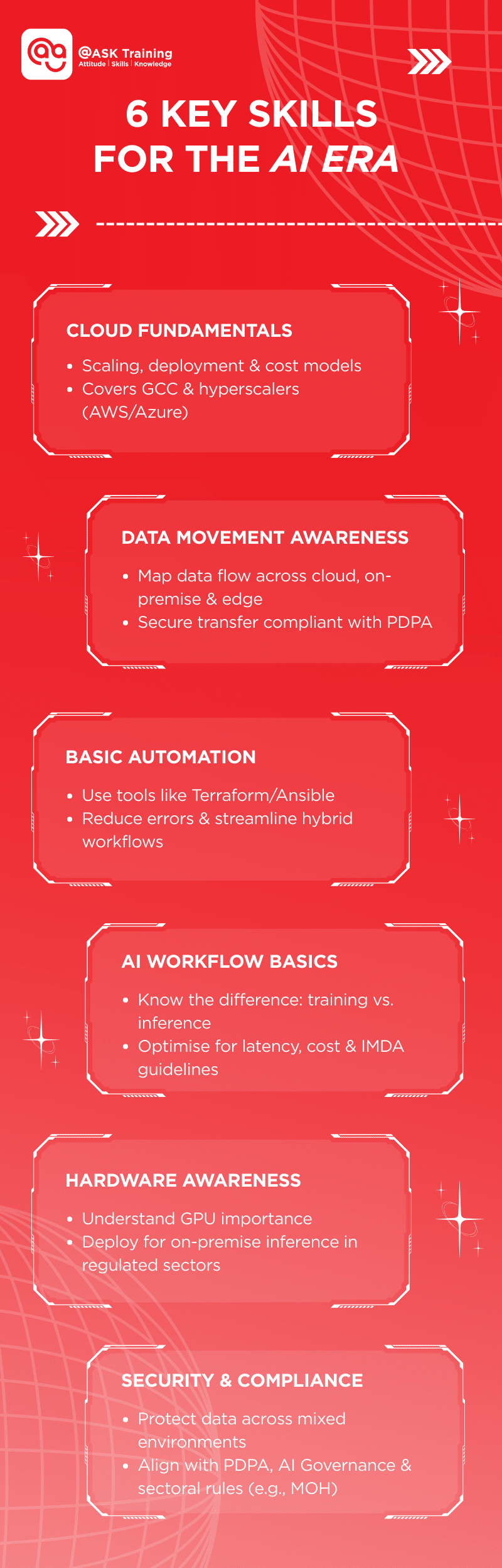

The Skills IT Professionals Need to Stay Relevant

IT professionals do not need to become AI researchers. They do need broader infrastructure skills.

The most important areas to focus on include:

- Cloud fundamentals: Understanding how scaling, deployment, and cost models work in environments like Government Commercial Cloud (GCC) and hyperscalers such as AWS or Azure.

- Data movement awareness: Knowing where data lives, how it flows, and how to move it securely between cloud, on-premise data centres, and edge while adhering to PDPA.

- Basic automation: Using tools like Terraform or Ansible to reduce manual setup, prevent errors, and streamline AI workflows in hybrid setups.

- AI workflow basics: Understanding the difference between AI training and inference, and where each should happen to optimise for latency and cost under IMDA guidelines.

- Hardware awareness: Knowing why GPUs matter, where they’re needed (e.g., for on-premise inference in sensitive sectors), and how to manage them in hybrid setups.

- Security and compliance: Protecting data across mixed environments while meeting PDPA, the Model AI Governance Framework, and sector-specific expectations like those from MOH.

Building these competencies doesn’t just keep IT teams relevant—it prepares them to lead in an era where infrastructure for AI workloads is the foundation of innovation.

A Simple Framework to Choose Cloud, On-Prem, or Hybrid

You don’t need complex models to make the right infrastructure choice for AI. Use this straightforward guide:

- Choose cloud when speed, experimentation, and growth matter most

- Choose on-premise when data is sensitive, regulated or must stay locally controlled

- Choose edge when decisions must happen instantly, with no room for delay

- Choose hybrid when different parts of your AI workflow have different needs – like secure data on-premise and scalable training in the cloud
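The guide can be expressed as a small rule function. This is a minimal sketch: the `WorkloadNeeds` flags are hypothetical names for the questions above, and defaulting to cloud when no constraint is flagged is an assumption, not part of the guide.

```python
from dataclasses import dataclass


@dataclass
class WorkloadNeeds:
    # Hypothetical flags, one per question in the guide.
    fast_experimentation_or_growth: bool = False
    sensitive_or_regulated_data: bool = False
    instant_decisions: bool = False


def recommend(needs: WorkloadNeeds) -> list[str]:
    """Map a workload's needs to environments, following the simple guide."""
    choices = []
    if needs.fast_experimentation_or_growth:
        choices.append("cloud")
    if needs.sensitive_or_regulated_data:
        choices.append("on-premise")
    if needs.instant_decisions:
        choices.append("edge")
    # Different parts of the workflow need different places: that is hybrid.
    if len(choices) > 1:
        return ["hybrid"] + choices
    # Assumption: with no constraint flagged, default to cloud (no upfront hardware).
    return choices or ["cloud"]


# The hospital: patient scans must stay local, but the model is refined in the cloud.
print(recommend(WorkloadNeeds(fast_experimentation_or_growth=True, sensitive_or_regulated_data=True)))
```

For the hospital this returns `['hybrid', 'cloud', 'on-premise']`: a hybrid setup that uses each environment for the part of the workflow it suits.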

Remember: Most real organisations – like the retailer and hospital in our examples – end up combining multiple approaches. Your solution might be hybrid today and include edge tomorrow.

Wrapping Up

AI is not just another technology upgrade. It reshapes how IT infrastructure must work.

Cloud vs on-premise is no longer a binary choice. Each plays a critical role in supporting AI systems, with hybrid cloud infrastructure and edge computing emerging as the practical middle ground.

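Here’s a quick recap:

- Cloud for scale, experimentation, and on-demand GPU training

- On-premise for control, compliance, and sensitive data

- Edge for speed and real-time decisions where data is created

- Hybrid to combine them, so each part of the AI workflow runs where it fits best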

As AI adoption accelerates in Singapore and beyond, IT professionals who understand infrastructure for AI workloads will be increasingly valuable. They won’t just maintain systems; they’ll design intelligent environments where AI can thrive.

The future belongs to those who can design systems that balance speed, security, and scalability in an AI-driven world.

Ready to Build Your Future in AI-Ready Infrastructure?

Whether you’re looking to deepen your understanding of IT infrastructure or master the tools needed for cloud, automation, and emerging tech, ASK Training offers structured learning paths designed for Singapore’s evolving digital landscape.

Explore our IT Infrastructure & Service Management courses:

- IT Infrastructure and Operations

- IT Infrastructure Planning and Optimisation

- IT Disaster Recovery and Business Continuity

- And more

Dive into Cloud, Automation & Emerging Tech bundle courses:

- Cloud Computing

- IT Infrastructure Automation and Orchestration

- Emerging Technologies and Trends

- Enterprise Architecture and Design

Equip yourself with the skills to design, manage, and secure the intelligent systems of tomorrow!
